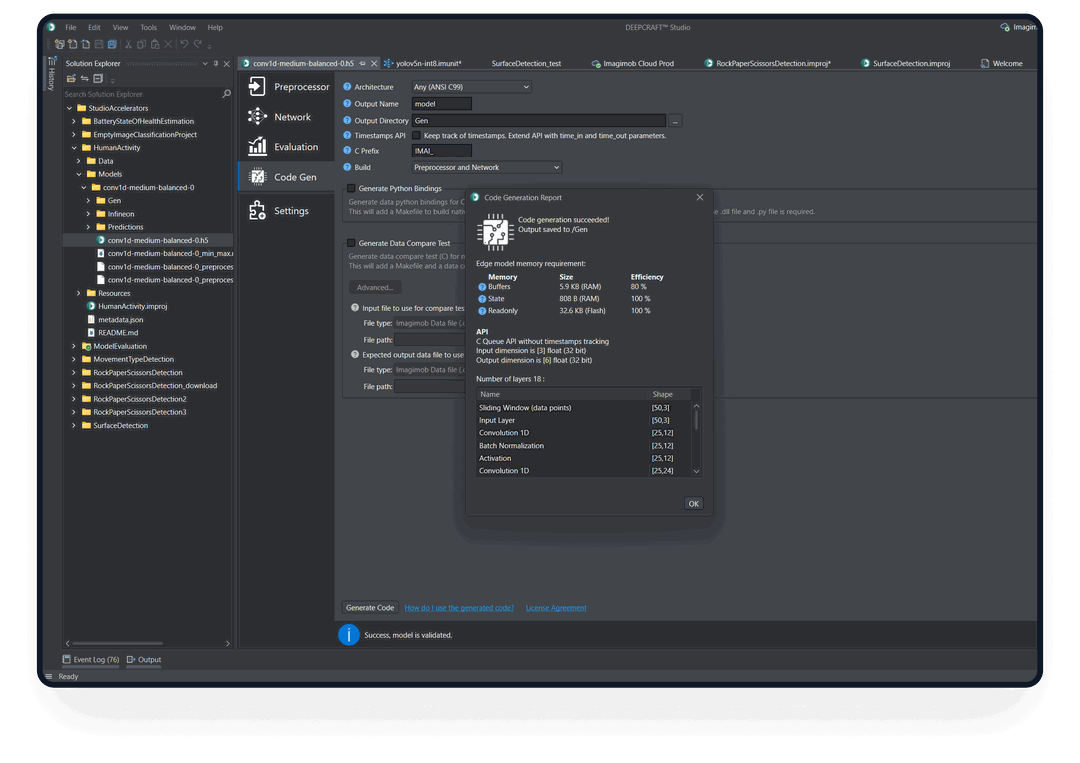

DEEPCRAFT™ Studio is a platform for developing machine learning models for edge devices.

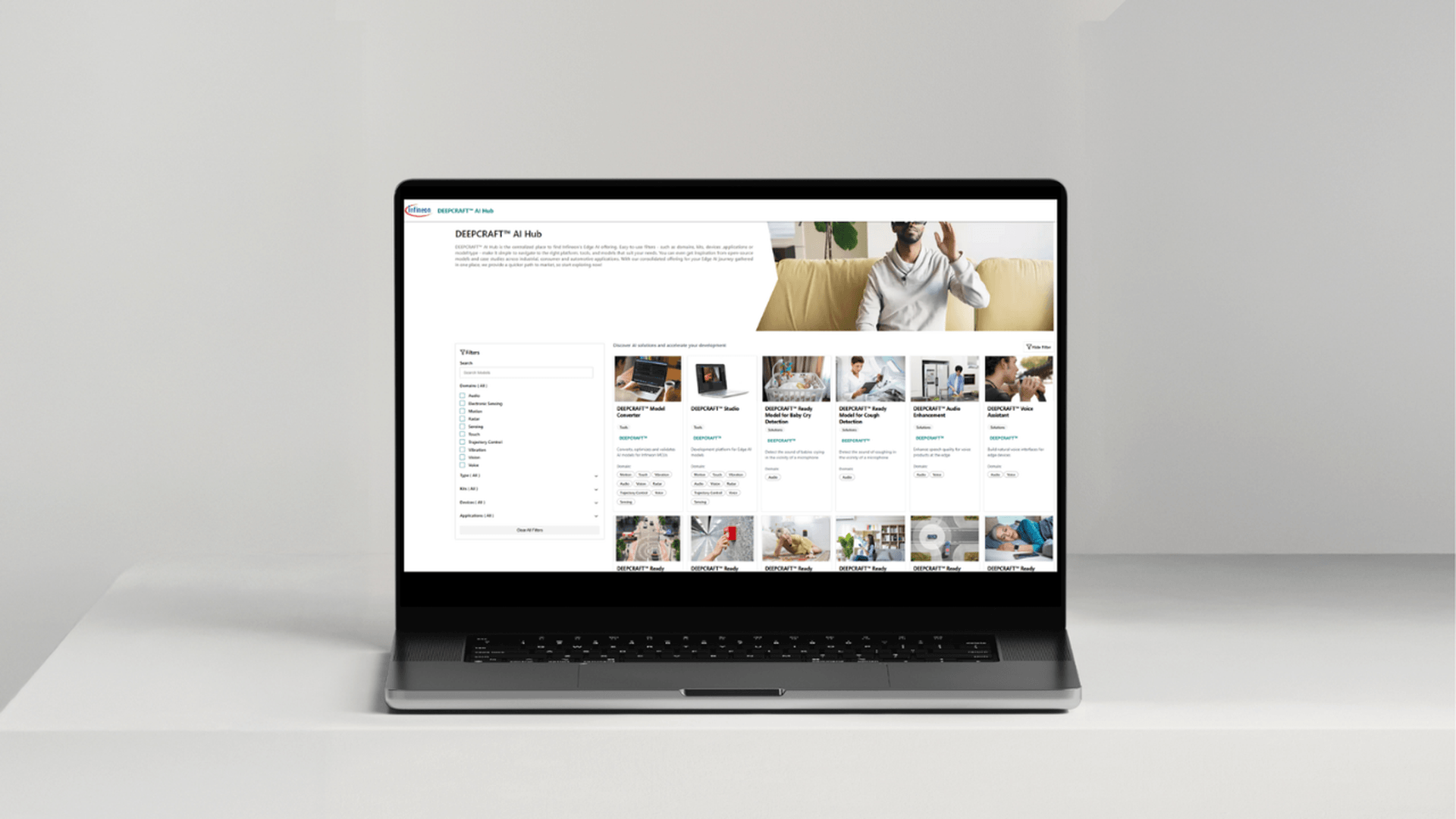

DEEPCRAFT™ Model Converter is a software application for converting, optimizing and validating AI models to run on Infineon MCUs.

DEEPCRAFT™ Ready Models are production-ready AI / machine learning (ML) models that can be added to any edge device.

DEEPCRAFT™ Studio Accelerators are designed to inspire! Each one gives you datasets, preprocessing, a model architecture and instructions that you can develop into custom models.

DEEPCRAFT™ Voice Assistant lets you create a natural voice interface for your products that runs on-device, requires no network connectivity and achieves industry-leading power efficiency.

DEEPCRAFT™ Audio Enhancement turns real-world audio and the noise that comes with it into crystal-clear voice.

DEEPCRAFT™ AI Hub is the centralized place to find Infineon's Edge AI offering. Filter by domain, kit, device, application or content type, making it simple to navigate to the offering that suits your needs.

You know what the problem with today's machine learning (ML) is? It's largely reserved for the cloud. Models use too much memory and compute, so they often can't run on embedded hardware, even though that's where a lot of important data is generated.

Imagine what you could do if you could bring AI / ML into your embedded devices. You could do audio classification without any data ever leaving the device. You could sense that a machine is about to break and turn it off within a few milliseconds, even when the connection is down.

DEEPCRAFT™ Studio is the Edge AI development platform that allows you to do just that. It makes it easier than ever before to develop AI / ML models for edge devices, from data collection through deployment.